There is a concept in tech called a ‘boring infrastructure win’ — something unsexy that quietly changes everything. MCP, the Model Context Protocol, is 2025’s version of that. You will not find it trending on social media, but if you are building anything with AI, it is the most important standard to understand right now.

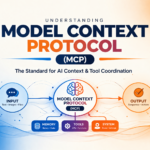

What Is MCP?

MCP is an open protocol, introduced by Anthropic in November 2024 and now widely adopted, that standardises how AI applications connect to external tools, data sources, and services. Before MCP, every AI integration was bespoke: you would write custom code to connect your LLM to your CRM, then more custom code to connect it to your database, then more for your calendar. The result was a mess of one-off pipes.

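MCP defines a common client/server architecture, with messages exchanged as JSON-RPC 2.0, so that an AI application can connect to any MCP-compliant server in the same standard way. The application (the host) runs one client per server connection, and each server exposes the capabilities of some tool or data source. Connect once to the protocol; connect to everything.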

Why It Matters for Agentic AI

AI agents need to interact with the world — search the web, read files, query databases, send messages, call APIs. Before MCP, this required custom tool integration for every capability. With MCP, an agent can dynamically discover and use any available tool through a standard interface. This is what makes multi-step, multi-tool agents actually practical to build.
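To make "dynamically discover and use" concrete, here is a minimal in-memory sketch. It is not the MCP SDK: `ToyServer`, `discover`, `invoke`, and the `server.tool` naming scheme are all assumptions made up for illustration. The point is only that the agent asks each connected server what it offers and builds its routing table at run time, rather than hard-coding integrations.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class ToyServer:
    """Hypothetical stand-in for one MCP server connection (in-memory only)."""
    name: str
    # tool name -> (description, handler)
    tools: Dict[str, Tuple[str, Callable[..., str]]]

    def list_tools(self) -> List[dict]:
        # Advertise metadata only; handlers stay on the server side.
        return [{"name": n, "description": d} for n, (d, _) in self.tools.items()]

    def call_tool(self, name: str, arguments: dict) -> str:
        _, handler = self.tools[name]
        return handler(**arguments)


def discover(servers: List[ToyServer]) -> Dict[str, ToyServer]:
    """Build a routing table: qualified tool name -> server that provides it."""
    routes: Dict[str, ToyServer] = {}
    for server in servers:
        for tool in server.list_tools():
            # "server.tool" is an illustrative convention, not part of MCP.
            routes[f"{server.name}.{tool['name']}"] = server
    return routes


def invoke(routes: Dict[str, ToyServer], qualified_name: str, arguments: dict) -> str:
    """Route a call to whichever server advertised the tool."""
    server = routes[qualified_name]
    tool_name = qualified_name.split(".", 1)[1]
    return server.call_tool(tool_name, arguments)


files = ToyServer("files", {
    "read": ("Read a file by path.", lambda path: f"<contents of {path}>"),
})
chat = ToyServer("chat", {
    "send": ("Send a message to a channel.",
             lambda channel, text: f"sent to {channel}: {text}"),
})

routes = discover([files, chat])
result = invoke(routes, "chat.send", {"channel": "#dev", "text": "build passed"})
```

Nothing in `discover` or `invoke` knows about files or chat specifically. Adding a third server to the list is the only change needed to give the agent a new capability.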

By mid-2025, MCP had become what Splunk described as ‘the universal protocol for AI-native APIs.’ Major platforms — from Slack to Salesforce to GitHub — published MCP servers, meaning AI assistants could connect to the enterprise software stack without months of integration work.

How It Works (Simply)

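An MCP server exposes tools and resources (and, optionally, reusable prompts) through a defined interface. The client inside the AI application connects to each server, asks it for its tool list, and passes the tool names and descriptions to the LLM. The LLM decides which tools to use based on context: it reasons about which are relevant and requests a call with specific arguments. The application then sends that call to the right server and returns the result to the model.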
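The exchange described above can be sketched as two JSON-RPC 2.0 requests, `tools/list` and `tools/call`. The block below is a simplified toy, not a conforming server: it runs in-process, skips the initialization handshake and the transport layer, and the `add` tool and `handle_request` function are hypothetical names chosen for the example. The message shapes follow the published specification as best understood here, so check the spec before relying on any field.

```python
import json


def add(a: float, b: float) -> float:
    """Hypothetical tool used only for this illustration."""
    return a + b


# Tool registry: the metadata is what a client sees; the handler stays server-side.
TOOLS = {
    "add": {
        "description": "Add two numbers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
            "required": ["a", "b"],
        },
        "handler": add,
    }
}


def _reply(request_id, *, result=None, error=None) -> str:
    """Serialise a JSON-RPC 2.0 response with either a result or an error."""
    body = {"jsonrpc": "2.0", "id": request_id}
    if error is not None:
        body["error"] = error
    else:
        body["result"] = result
    return json.dumps(body)


def handle_request(raw: str) -> str:
    """Handle one JSON-RPC request string and return the response string."""
    request = json.loads(raw)
    request_id = request.get("id")
    method = request.get("method")
    params = request.get("params", {})

    if method == "tools/list":
        tools = [
            {"name": name, "description": spec["description"],
             "inputSchema": spec["inputSchema"]}
            for name, spec in TOOLS.items()
        ]
        return _reply(request_id, result={"tools": tools})

    if method == "tools/call":
        spec = TOOLS.get(params.get("name"))
        if spec is None:
            return _reply(request_id, error={"code": -32602, "message": "Unknown tool"})
        value = spec["handler"](**params.get("arguments", {}))
        return _reply(request_id, result={
            "content": [{"type": "text", "text": str(value)}],
            "isError": False,
        })

    return _reply(request_id, error={"code": -32601, "message": "Method not found"})


# Client side: first discover the tools, then call one by name.
listing = json.loads(handle_request(json.dumps(
    {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
call = json.loads(handle_request(json.dumps(
    {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
     "params": {"name": "add", "arguments": {"a": 2, "b": 3}}})))
```

In a real deployment the model never sees these raw messages. The host application shows it the tool names and descriptions from the listing, and translates the model's choice into the `tools/call` request.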

The beauty is that the LLM does not need to be retrained every time a new tool is added. Tool descriptions are supplied to the model at run time as part of its context, so you just connect a new MCP server and the AI can use its tools immediately.
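A rough way to see why no retraining is needed: the tool list is just data handed to the model with each request. The sketch below renders that list as plain text. `render_tool_catalog` and both tool names are hypothetical, and real hosts typically pass tool definitions through the model API's structured tool-use parameters rather than as a text block, so treat this as an illustration of the idea only.

```python
from typing import List


def render_tool_catalog(tools: List[dict]) -> str:
    """Turn tool metadata into text a host might place in the model's context."""
    lines = ["You can use these tools:"]
    for tool in tools:
        lines.append(f"- {tool['name']}: {tool['description']}")
    return "\n".join(lines)


# Tools advertised by the servers connected so far (hypothetical names).
tools = [{"name": "search_docs", "description": "Search internal documentation."}]
before = render_tool_catalog(tools)

# Connecting another server only adds entries; the model itself is unchanged.
tools.append({"name": "run_query", "description": "Run a read-only SQL query."})
after = render_tool_catalog(tools)
```

The only difference between `before` and `after` is one extra line of context, which is all the model needs in order to start choosing the new tool.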

Real Examples

A developer using Claude with MCP can connect their coding assistant to GitHub (to read and modify repositories), their terminal (to run commands), their database (to run queries), and their documentation (to search internal knowledge) — all through a standard protocol. The AI can then read a bug report, look up the relevant code, run the failing tests, write a fix, and open a pull request. End to end. No custom plumbing for each step.

The Broader Impact

MCP is doing for AI what USB did for devices. Before USB, peripherals used a patchwork of different connectors. USB standardised the connection, and hardware innovation accelerated because a device maker could build against one port instead of many. In the same way, the speed of innovation in agentic systems in 2025 is partly a consequence of not having to rebuild the integration layer from scratch every time.

FAQs

Is MCP only for developers?

Currently, yes: setting up MCP servers and clients requires technical knowledge. But no-code tools that abstract this layer are emerging, and within 12 to 18 months consumer AI products will likely handle MCP connections transparently.

Is MCP secure?

Security depends on implementation. The specification defines an OAuth-based authorization framework for servers reached over HTTP, but how individual MCP servers implement authentication and access control varies. Enterprise deployments need a careful review of which permissions each server has, and of which tools each agent is allowed to call.
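One common way to act on that review is a client-side allowlist: the host shows the model only approved tools and refuses any call outside the list. The sketch below assumes a policy shaped as server name to permitted tool names. The server and tool names are invented for the example and are not taken from any real MCP server, and this check complements the server's own authentication rather than replacing it.

```python
from typing import Dict, List, Set

# Hypothetical policy: server name -> tool names the agent may use.
ALLOWED: Dict[str, Set[str]] = {
    "github": {"read_file", "list_issues"},
    "database": {"run_readonly_query"},
}


def filter_tools(server: str, advertised: List[str]) -> List[str]:
    """Keep only the advertised tools that the policy permits for this server."""
    allowed = ALLOWED.get(server, set())
    return [name for name in advertised if name in allowed]


def authorize(server: str, tool: str) -> None:
    """Raise PermissionError before a disallowed call reaches the server."""
    if tool not in ALLOWED.get(server, set()):
        raise PermissionError(f"{server}.{tool} is not on the allowlist")


# The server advertises three tools; the model is shown only the permitted two.
visible = filter_tools("github", ["read_file", "delete_repo", "list_issues"])
```

Filtering what the model sees and checking again at call time are deliberately separate steps, so a call to a hidden tool is still rejected. Servers that are missing from the policy get no tools at all, which makes deny-by-default the starting position.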

Bottom line: MCP is the plumbing that makes the agentic AI vision real. If you are building AI applications in 2025, learning this protocol is not optional — it is foundational.